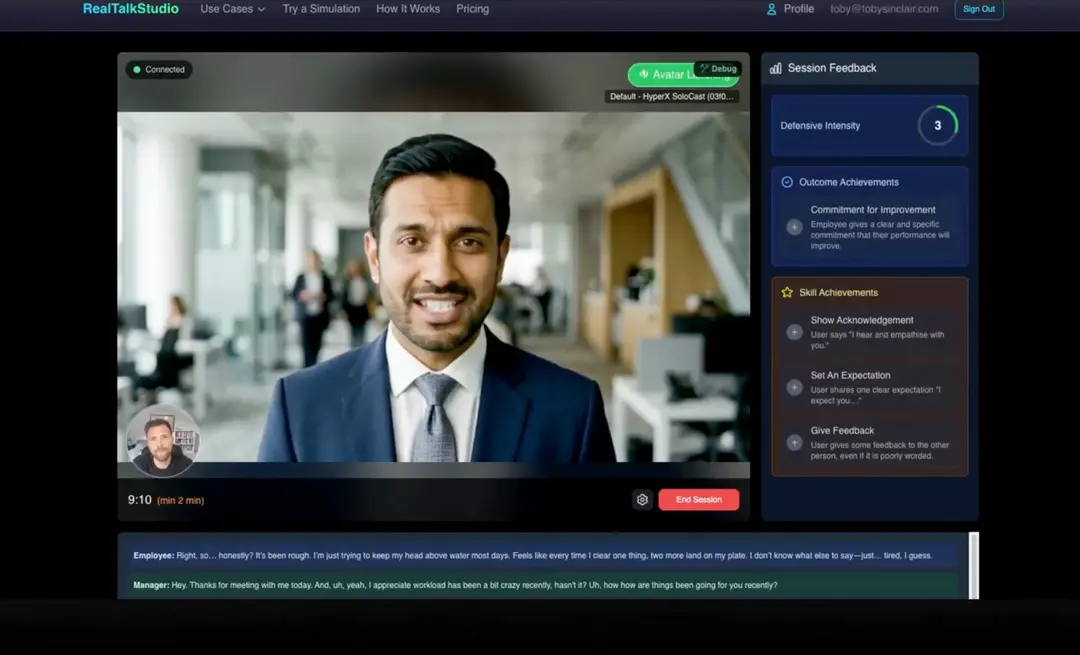

Regulators, tribunals, and boards are no longer asking "did your people complete the training?" They're asking "can your people actually do this under pressure?" Real Talk Studio — an AI-powered conversation simulation platform used by regulated enterprises to verify workforce readiness — integrated Interhuman AI's social signals engine to answer a question its clients kept raising: if the evidence only captures what someone said, how do you prove they said it with the empathy, composure, and active listening the moment demanded?

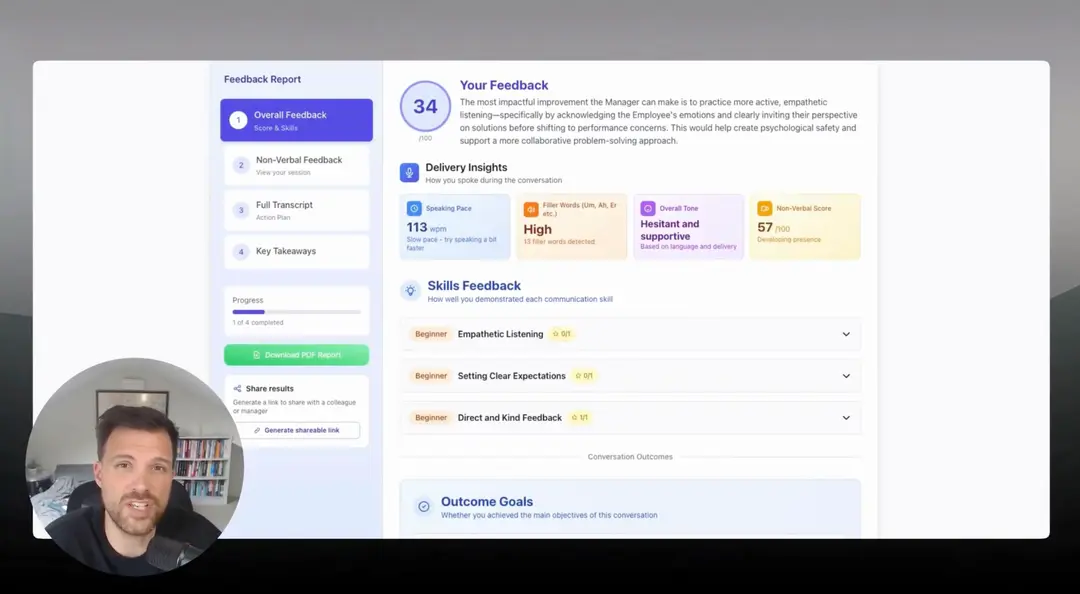

By adding non-verbal analysis to its simulation platform, Real Talk Studio now produces richer behavioural evidence — capturing not just the words, but the delivery. For compliance teams building tribunal-ready documentation and HR leaders measuring real manager capability, this changes what "proof of competence" looks like.

The Challenge

Before integrating Interhuman AI, Real Talk Studio's feedback was built entirely on what was said — the words, phrasing, structure, and flow of the conversation. As Toby Sinclair, Founder of Real Talk Studio, explains:

"Real Talk Studio could assess whether someone followed the right process, but not whether they delivered it in a way that felt human."

In high-stakes conversations, such as a manager responding to a disclosure of sexual harassment or a frontline worker supporting a vulnerable customer, the how matters as much as the what. A manager who says the right words but appears distracted, dismissive, or uncomfortable sends a message that no transcript captures. At tribunal, that gap between words and delivery is exactly where cases are won or lost.

Real Talk Studio needed a way to make the invisible visible: to surface non-verbal signals like engagement, hesitation, confusion, and composure — and feed them back to the user as actionable insight.

Why Interhuman AI

Real Talk Studio had previously explored another non-verbal feedback provider, but chose Interhuman AI because of its fundamentally different philosophy: social signals over prescriptive checklists.

Rather than scoring users on whether they sat up straight or touched their face too often, Interhuman AI's model identifies social signals — patterns that indicate something meaningful is occurring in the interaction. Engagement. Hesitation. Confusion. Shifts in social dynamics. These are the signals that matter in a compliance-critical conversation, not a checklist of physical behaviours.

For Real Talk Studio, this distinction was critical. Their clients are compliance leaders and HR directors in regulated enterprises. They need evidence of genuine behavioural competence — not a posture score.

The Integration

On the technical side, the integration was straightforward. Interhuman AI's API connected cleanly into Real Talk Studio's existing simulation architecture. The real design work was in the user experience: how to present non-verbal feedback in a way that builds self-awareness without feeling like surveillance, and how to handle privacy considerations thoughtfully.

The platform's design principle is clear: the AI is not replacing human judgement. It's acting as a mirror — making social signals visible so that users can build self-awareness and improve before the real moment arrives.

The Active Listening Breakthrough

One of the most valuable discoveries in the integration has been the power of active listening feedback.

In a typical difficult conversation, such as a disclosure, a complaint, or a vulnerable employee reaching out, the person on the receiving end spends a significant portion of the interaction listening, not speaking. Until now, a text-based simulation had no way to assess the quality of that listening. A manager could appear engaged or distracted, concerned or uncomfortable, present or checked out, and none of it would show up in the transcript.

With Interhuman AI's signal detection active during listening moments, Real Talk Studio can now surface whether someone appeared engaged, distracted, hesitant, or concerned while the other person was speaking. For compliance teams, this is significant: a tribunal examining how a harassment disclosure was handled will scrutinise not just what the manager said in response, but whether they appeared to take the disclosure seriously in the moment it was made.

For HR leaders measuring manager development, active listening data closes a gap that workshops and coaching have never been able to address at scale.

Early Results

In early pilot testing with leaders, 80% of participants reported that the non-verbal feedback was valuable and could help them improve their performance. Privacy and safety were the most common themes in participant feedback. Real Talk Studio addressed them through transparent opt-in design and clear data handling policies, consistent with its ongoing ISO 27001 certification process.

The signal accuracy ratings from pilot users were positive, and the team observed that once users experienced how non-verbal signals shaped their simulation feedback, engagement with the feature increased naturally.

What This Means for HR Leaders and Compliance

The integration changes what Real Talk Studio can evidence. Before Interhuman AI, a simulation report showed whether someone said the right things. Now, it shows whether they said them in a way that demonstrated genuine engagement, composure, and empathy.

For a Head of Compliance evidencing the "reasonable steps" that the Worker Protection Act requires employers to take to prevent sexual harassment, a simulation transcript that includes non-verbal signal data is materially stronger evidence than one that captures words alone. It demonstrates that the organisation tested not just knowledge, but the human qualities that determine whether a real conversation goes well or badly.

For a CHRO or HR Director investing in manager development, non-verbal feedback closes the knowing-doing gap in a way that no workshop debrief can. A manager who freezes, appears dismissive, or loses composure under simulated pressure gets feedback they can act on — before the real conversation happens.

See It in Action

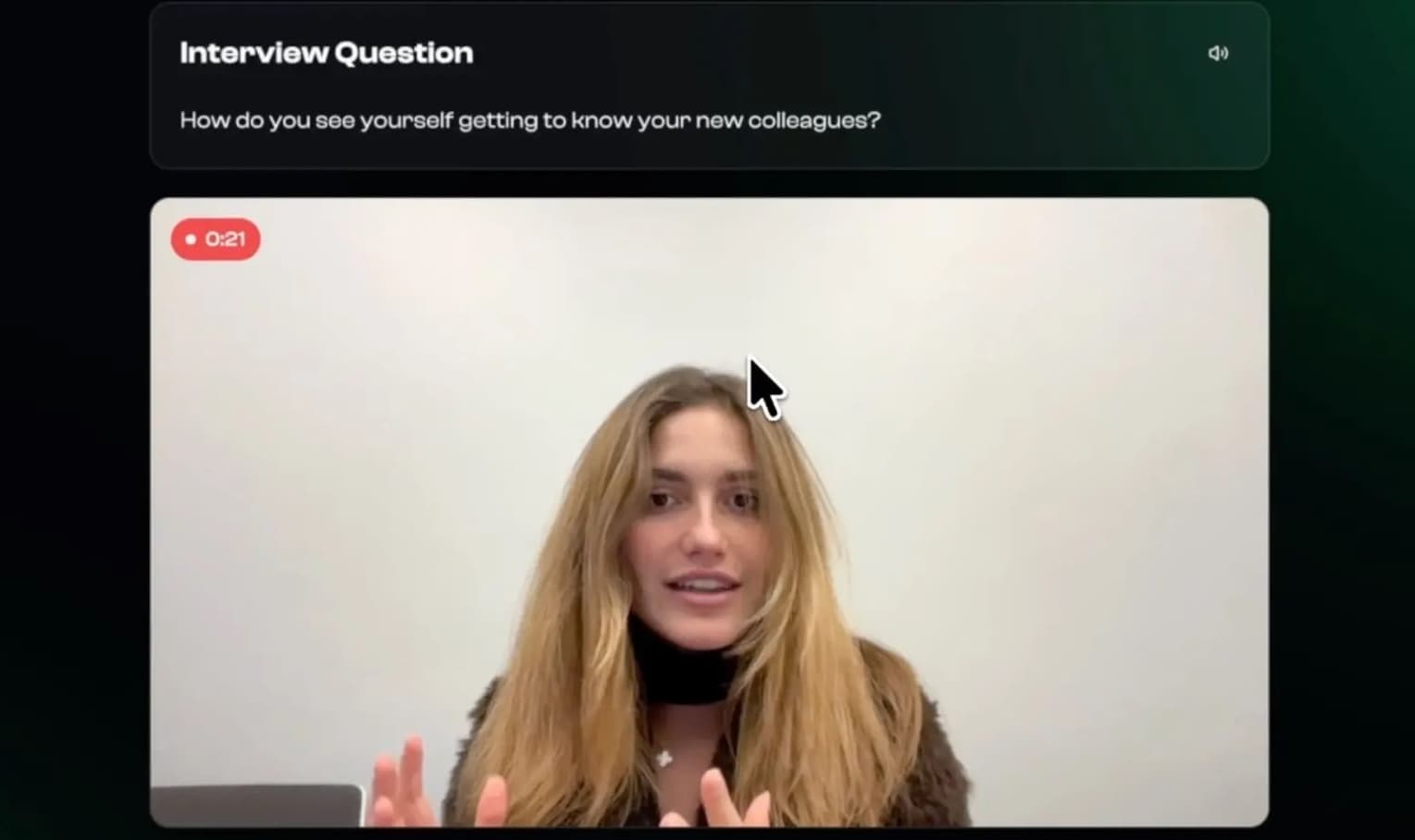

Watch a live demonstration of Real Talk Studio's simulation platform with non-verbal feedback:

Or try this exact scenario yourself: https://www.realtalkstudio.com/start-practice

What's Next

Interhuman AI's social signals engine is currently live within Real Talk Studio's Presentation Practice tool. Integration into the full roleplay simulation suite — which represents the core of the platform's enterprise deployment — is in active development, with signal feedback being designed for both speaking and active listening moments.

The phased approach reflects a deliberate design choice: getting the user experience right matters more than shipping fast, particularly when the output is evidence that compliance teams will rely on.

Real Talk Studio is tracking client feedback and user opt-in rates as the rollout progresses, with the expectation that demonstrated value in early deployments will drive organic adoption across its regulated enterprise client base.

About the Customer

Real Talk Studio is an AI-powered conversation simulation platform that helps regulated enterprises verify workforce readiness for high-stakes moments. The platform produces audit-ready behavioural evidence — timestamped simulation transcripts, behavioural scores, and pass/fail outcomes — used by compliance teams, HR leaders, and L&D functions across financial services, healthcare, social housing, and other regulated sectors. Real Talk Studio sits on top of existing training as the testing and assurance layer: proving not just that people attended, but that they can perform under pressure.